Gawbni transforms PDFs, help articles, and internal docs into a structured knowledge layer so every AI response can be verified.

Source-Grounded

Every answer cites its source

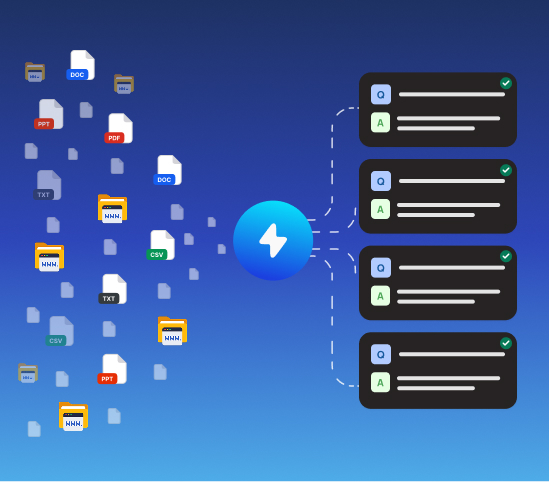

Multi-Format

PDFs, docs, wikis, URLs

Generic AI can sound plausible while being wrong. That creates misinformation, trust erosion, and costly errors.

Without grounding, models can invent facts confidently.

Knowledge spread across many tools leads to inconsistency.

Teams cannot trace why a wrong answer was generated.

Policies and product changes are not reflected quickly enough.

Truth Layer grounds every response in your validated content with citations and confidence signals.

AI answers from your uploaded materials instead of guessing.

Each response can point to exact supporting evidence.

Content changes are reflected as soon as they are indexed, with no model retraining.

Unanswered questions show where knowledge needs improvement.

PDF, docs, URLs, and text are unified into one knowledge layer.

Low-confidence responses can escalate to a human instead of risking errors.

Add help articles, policy PDFs, internal docs, and URLs.

Content is indexed and organized into a searchable knowledge layer.

Responses are grounded and reference source evidence.

If no reliable source is found, the AI says so and escalates to a human instead of guessing.
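For readers who want a concrete picture of the flow above, here is a minimal Python sketch of grounded answering with citations and escalation. It is an illustration only, not Gawbni's implementation or API: the names `Passage`, `Answer`, `answer`, `threshold`, and `gap_log` are hypothetical, and the word-overlap score is a toy stand-in for whatever retrieval and confidence scoring a real system would use.

```python
from dataclasses import dataclass


@dataclass
class Passage:
    source: str  # hypothetical identifier, e.g. a file name or URL
    text: str


@dataclass
class Answer:
    text: str
    citations: list[str]
    escalated: bool


def tokenize(text: str) -> set[str]:
    """Lowercase words with surrounding punctuation stripped."""
    return {w.strip(".,?!").lower() for w in text.split()} - {""}


def score(question: str, passage: Passage) -> float:
    """Toy confidence: fraction of question words found in the passage."""
    q = tokenize(question)
    if not q:
        return 0.0
    return len(q & tokenize(passage.text)) / len(q)


def answer(question, index, threshold=0.5, gap_log=None):
    """Return the best-matching passage with its citation, or escalate."""
    best = max(index, key=lambda p: score(question, p), default=None)
    if best is None or score(question, best) < threshold:
        # No reliable source: record the gap and hand off instead of guessing.
        if gap_log is not None:
            gap_log.append(question)
        return Answer("No reliable source found; escalating to a human.", [], True)
    return Answer(best.text, [best.source], False)


# Example usage with two made-up documents.
index = [
    Passage("refund-policy.pdf", "Refunds are available within 30 days of purchase."),
    Passage("shipping.md", "Orders ship within two business days."),
]
gaps = []
grounded = answer("Are refunds available within 30 days?", index, gap_log=gaps)
unknown = answer("What is the warranty period?", index, gap_log=gaps)
```

In this sketch the first question is answered from `refund-policy.pdf` and carries that citation, while the second finds no supporting passage, is escalated, and is appended to `gaps` so the missing content can be added later.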

PDFs, plain text, URLs, and text-based internal documentation are supported.

Updates take effect immediately after indexing. No model retraining is required.

Yes. Each response includes citations linking to source material.

Questions that cannot be answered are logged so you know exactly what to add next.

Yes. Source grounding and citation are core capabilities of Gawbni.

Upload your documentation and get AI responses backed by facts and citations.

Start free trial